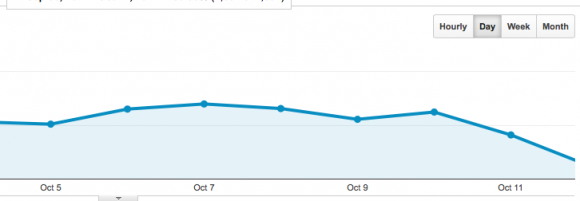

It’s the situation that every webmaster or SEO person dreads: they log in to their Google Analytics account only to see that their traffic has nosedived into oblivion. Now is not the time for panicking though! Websites and how they acquire traffic are a lot more complex than they used to be, so finding the source of the problem needs to be tackled correctly and methodically. Here’s a short guide to some of the main areas you should be investigating.

Look Closely At Traffic Source Changes

The first thing you want to do when you see the traffic dip is to find out the exact source. Go to Acquisition > Overview and check all your traffic sources; if it’s an SEO issue then your organic traffic will be down. If you have a lot of referral traffic from other sites or social media platforms and this is down, then make sure any ads or links you have there are still up and running.
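To make the comparison concrete, here is a minimal sketch that ranks channels by how much traffic they lost between two periods. The channel names and session counts are made-up illustration data, and `channel_changes` is a hypothetical helper, not something Google Analytics provides; in practice you would paste in the numbers from your own Acquisition report.

```python
# Sketch: rank traffic channels by how much they lost between two periods.
# The channel names and session counts below are made-up illustration data,
# not figures from any real Analytics account.

def channel_changes(before, after):
    """Return (channel, absolute_change, percent_change) tuples, biggest loss first."""
    rows = []
    for channel in sorted(set(before) | set(after)):
        old = before.get(channel, 0)
        new = after.get(channel, 0)
        delta = new - old
        # Percent change is undefined when the channel had no traffic before.
        pct = (delta / old * 100) if old else None
        rows.append((channel, delta, pct))
    return sorted(rows, key=lambda row: row[1])


last_month = {"Organic Search": 12000, "Referral": 3000, "Social": 1500, "Direct": 4000}
this_month = {"Organic Search": 5400, "Referral": 2900, "Social": 1600, "Direct": 3900}

report = channel_changes(last_month, this_month)
for channel, delta, pct in report:
    pct_text = "n/a" if pct is None else f"{pct:+.1f}%"
    print(f"{channel:<15} {delta:>+7} {pct_text:>8}")
```

With this sample data the organic channel lands at the top of the list, which is the pattern you would expect from an SEO problem rather than a broken ad or referral link.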

Inspect On Page Elements

Is the title tag still the same, and is all the other meta information correct? For high-traffic, highly competitive terms, changing an optimized title tag can cause a page or site to plummet from page one of the rankings, and all your organic traffic will go with it. Other things to look out for are noindex tags on your pages and nofollow attributes on your inner page links. A noindexed page will be dropped from the index, and if all the links to your inner pages are nofollowed then Googlebot may stop following them and those pages can lose their rankings. H1 & H2 tags are also very important, and something as simple as removing a target keyword from these can cause a page to suffer.
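If you have many pages to check, the elements above can be pulled out with a short script. The sketch below uses only Python’s standard `html.parser` module; the `OnPageAudit` class and the sample HTML are hypothetical and only meant to show the idea, so treat it as a starting point rather than a full SEO crawler.

```python
from html.parser import HTMLParser


class OnPageAudit(HTMLParser):
    """Collects the title, h1/h2 text, noindex flag and nofollowed links of a page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.headings = []        # list of (tag, text) for h1 and h2
        self.noindex = False      # True if a robots meta tag contains "noindex"
        self.nofollow_links = []  # hrefs of <a> tags whose rel includes "nofollow"
        self._open = None         # tag whose text is currently being collected
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag in ("title", "h1", "h2"):
            self._open, self._buffer = tag, []
        elif tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            if "noindex" in (attrs.get("content") or "").lower():
                self.noindex = True
        elif tag == "a":
            rel = (attrs.get("rel") or "").lower().split()
            if "nofollow" in rel:
                self.nofollow_links.append(attrs.get("href"))

    def handle_data(self, data):
        if self._open:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == self._open:
            # Collapse runs of whitespace so the text is easy to compare.
            text = " ".join("".join(self._buffer).split())
            if tag == "title":
                self.title = text
            else:
                self.headings.append((tag, text))
            self._open = None


# Made-up page used only to demonstrate the audit.
sample_html = """
<html><head>
<title>Blue Widgets | Example Shop</title>
<meta name="robots" content="noindex, follow">
</head><body>
<h1>Blue Widgets</h1>
<h2>Why choose our widgets</h2>
<a href="/widgets/large" rel="nofollow">Large widgets</a>
<a href="/widgets/small">Small widgets</a>
</body></html>
"""

audit = OnPageAudit()
audit.feed(sample_html)
print("title:", audit.title)
print("headings:", audit.headings)
print("noindex:", audit.noindex)
print("nofollowed links:", audit.nofollow_links)
```

Saving this output for your key pages gives you a baseline, so after a traffic drop you can re-run the audit and see which of these elements changed.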

Log In to Google Webmaster Tools

Here you need to check your site messages (Google has since renamed the tool Search Console). If you have received a manual Google penalty you should have a message here. Note, though, that many Google penalties are algorithmic, e.g. Panda, and do not come with a warning. Then check the following:

Index status – here you can see how many pages on your site Google has indexed; a sudden drop in this number means there is a problem.

Crawl errors – if your site has experienced any downtime or has too many 404 pages, this will have a major effect not just on how Google sees your site; it can also cause users to leave and not come back!

Robots.txt status – just like on-page elements, the robots.txt file is very powerful and can cause a huge number of problems if it is not set up correctly. Use the tester tool to make sure that Googlebot is allowed to crawl the correct pages and parts of your site. Here’s a fantastic guide from Yoast on how to correctly configure a robots file.
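As a quick offline sanity check alongside the tester tool, Python’s standard `urllib.robotparser` module can tell you whether a given user agent is allowed to fetch a URL. The robots.txt rules and URLs below are made up for illustration. Note that this module does simple prefix matching and does not implement Google’s wildcard extensions, so treat it as an approximation and keep Google’s own tester as the final word.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt in which someone has accidentally blocked /blog/ for Googlebot.
robots_txt = """\
User-agent: *
Disallow: /admin/

User-agent: Googlebot
Disallow: /blog/
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())

# Made-up list of pages that should always be crawlable.
important_urls = [
    "https://www.example.com/",
    "https://www.example.com/blog/traffic-drop-guide",
    "https://www.example.com/products/blue-widgets",
]

blocked = [url for url in important_urls if not parser.can_fetch("Googlebot", url)]
for url in blocked:
    print("Blocked for Googlebot:", url)
```

To check a live site instead of a pasted file, `RobotFileParser` also offers `set_url()` and `read()`, which download the file before you call `can_fetch()`.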

Check Any Page Redirects

If you had any pages set up to redirect elsewhere, make sure all those 301s are still in place. If any of them have been removed or broken, all the traffic going to those pages is going to see a big, fat 404 page. Too many of these and Google will drop those pages from its index, taking their rankings with them!
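One way to keep on top of this is to hold a list of the redirects you expect and compare it with what the server actually returns. The sketch below shows only the comparison step: `check_redirects` is a hypothetical helper, and the paths and responses are hard-coded sample data. In practice you would collect the status code and Location header for each old URL with any HTTP client that does not follow redirects automatically.

```python
def check_redirects(expected, observed):
    """Compare expected 301 targets against observed (status, location) pairs.

    expected: {old_path: new_path}
    observed: {old_path: (status_code, location_header_or_None)}
    Returns {old_path: problem_description} for every redirect that is not OK.
    """
    problems = {}
    for old_path, target in expected.items():
        status, location = observed.get(old_path, (None, None))
        if status is None:
            problems[old_path] = "not checked"
        elif status == 404:
            problems[old_path] = "redirect removed, page returns 404"
        elif status != 301:
            problems[old_path] = f"expected 301, got {status}"
        elif location != target:
            problems[old_path] = f"301 points to {location}, expected {target}"
    return problems


# Made-up redirect map and made-up server responses for illustration.
expected_redirects = {
    "/old-pricing": "/pricing",
    "/2013/summer-sale": "/offers",
    "/about-us.html": "/about",
}
observed_responses = {
    "/old-pricing": (301, "/pricing"),
    "/2013/summer-sale": (404, None),
    "/about-us.html": (302, "/about"),
}

problems = check_redirects(expected_redirects, observed_responses)
for path, problem in problems.items():
    print(f"{path}: {problem}")
```

The sketch also flags a 302 where a 301 was expected, since a permanent redirect quietly turning into a temporary one is an easy change to miss.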

These are just some of the main things you should do as your first port of call when investigating a traffic drop, but many other things, ranging from canonical tags being removed to footer and navigation links breaking, can cause your site’s traffic to crash. Most if not all of these problems are brought on by humans making changes to your site, which is why it’s crucial to keep a log of all changes and edits you or your team make to your website.
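A change log does not need to be fancy to be useful. As a rough sketch, assuming each entry records a date, who made the change and what was changed (the field names, entries and the `changes_before` helper are all hypothetical), you can pull out everything that happened shortly before the traffic drop:

```python
from datetime import date, timedelta

# Made-up change log entries for illustration.
change_log = [
    {"date": date(2024, 2, 2), "who": "dev team", "what": "Upgraded CMS theme"},
    {"date": date(2024, 3, 11), "who": "content", "what": "Rewrote title tags on product pages"},
    {"date": date(2024, 3, 14), "who": "dev team", "what": "Edited robots.txt"},
    {"date": date(2024, 3, 20), "who": "content", "what": "Published new blog post"},
]


def changes_before(log, drop_date, window_days=7):
    """Return log entries made in the window_days up to and including drop_date."""
    window_start = drop_date - timedelta(days=window_days)
    return [entry for entry in log if window_start <= entry["date"] <= drop_date]


suspects = changes_before(change_log, drop_date=date(2024, 3, 15))
for entry in suspects:
    print(entry["date"], entry["who"], "-", entry["what"])
```

The seven-day window is an arbitrary default; widen it if search engines are slow to recrawl your site.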

17 thoughts on “Identifying Traffic Drop Causes”

This was a nice post. It shared a lot of details. Other things to look out for are noindex tags and nofollow tags for your inner page links.

Thanks David for sharing traffic related good blog to read.

Nice post David!

Traffic is the most important part of any website. Thanks for sharing!

Been on the Internet for 15+ years and never heard of you before. Nice read.

I have followed your blog for a long time and everything you share is high quality. Thank you very much!

Hello, David! Great and very informative guide to identify the causes of traffic drop. It helps me a lot to figure out some analytical issues with my website. The areas that you mentioned in the post are very important from the SEO point of view, one should always take care of these points so that your website should be loved by search engines.

Thanks for sharing and keep doing the good work:)

The blog you shared is really good. This blog will help a lot of people who work as SEO analysts. I agree with all your points. Good work and keep sharing…!

There’s nothing like that sick feeling in your stomach when you notice your traffic has dropped and then you go to check your rankings… on the other hand, there’s nothing like the joy you feel when you discover it was just a messed up tracking code or something 😉

Good sleuthing – you’ve done a really good job explaining how it takes a few tools (& skillsets) to correctly find root cause of analytics issues.

When we updated our site we used a new CMS (Drupal). Previously all our URLs had essentially been junk: the name of our website/the name of the CMS/lots of numbers, letters and symbols. Now the URLs are the site name/page name, but our search traffic has dropped by half. Would this have been the cause or should I be looking elsewhere?

hi,

thanks for sharing a helpful article.

this article is very helpful for me because i am working with SEO.

Here are a couple more causes of traffic drop:

1. Competitors beat you in the SERPs.

2. Seasonal changes in search volume.

Technically I agree with the post, though there could be many other causes of a traffic drop, such as malware attacks, infrequent site updates, etc.

If you have a website and you’re using SEO for it, then it’s really important to monitor its progress, not just the keywords but also the traffic. Ask yourself if all your strategies are working and if they reach your goal. Yes, a goal is important, and if it’s not being reached, then something is not right. You show us one of the most important things that we must do in monitoring traffic. Thanks for that.

Thank you for everything. I was on an SEO staff and I really am having these problems. I like your website. I think I’ll visit it regularly.

I’ve been trying to figure out my issues as well. It’s on two sites after a host transfer, database nightmare. I hope it recovers soon. Thanks for the post

Hi,

Nice post on technically identifying a traffic drop. It may also be caused by a site not being updated for a long time. Google wants your web pages updated and refreshed, not idle. I liked this article, thanks for sharing 🙂
